|

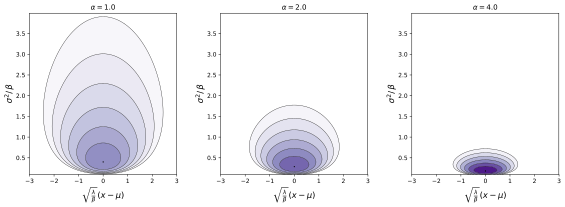

Probability density function  | |||

| Parameters |

location (real) (real) (real) (real) | ||

|---|---|---|---|

| Support | |||

| Mean |

| ||

| Mode |

| ||

| Variance |

, for | ||

In probability theory and statistics, the normal-inverse-gamma distribution (or Gaussian-inverse-gamma distribution) is a four-parameter family of multivariate continuous probability distributions. It is the conjugate prior of a normal distribution with unknown mean and variance.

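Definition

Suppose

$$x \mid \sigma^2, \mu, \lambda \sim \mathrm{N}\!\left(\mu, \frac{\sigma^2}{\lambda}\right)$$

has a normal distribution with mean $\mu$ and variance $\sigma^2/\lambda$, where

$$\sigma^2 \mid \alpha, \beta \sim \Gamma^{-1}(\alpha, \beta)$$

has an inverse-gamma distribution. Then $(x, \sigma^2)$ has a normal-inverse-gamma distribution, denoted as

$$(x, \sigma^2) \sim \text{N-}\Gamma^{-1}(\mu, \lambda, \alpha, \beta).$$

($\text{NIG}(\mu, \lambda, \alpha, \beta)$ is also used instead of $\text{N-}\Gamma^{-1}(\mu, \lambda, \alpha, \beta)$.)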

The normal-inverse-Wishart distribution is a generalization of the normal-inverse-gamma distribution that is defined over multivariate random variables.

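Characterization

Probability density function

$$f(x, \sigma^2 \mid \mu, \lambda, \alpha, \beta) = \frac{\sqrt{\lambda}}{\sqrt{2\pi\sigma^2}} \, \frac{\beta^\alpha}{\Gamma(\alpha)} \left(\frac{1}{\sigma^2}\right)^{\alpha + 1} \exp\left(-\frac{2\beta + \lambda (x - \mu)^2}{2\sigma^2}\right)$$

For the multivariate form where $\mathbf{x}$ is a $k \times 1$ random vector,

$$f(\mathbf{x}, \sigma^2 \mid \boldsymbol{\mu}, \mathbf{V}^{-1}, \alpha, \beta) = |\mathbf{V}|^{-1/2} (2\pi)^{-k/2} \, \frac{\beta^\alpha}{\Gamma(\alpha)} \left(\frac{1}{\sigma^2}\right)^{\alpha + 1 + k/2} \exp\left(-\frac{2\beta + (\mathbf{x} - \boldsymbol{\mu})^{\mathsf{T}} \mathbf{V}^{-1} (\mathbf{x} - \boldsymbol{\mu})}{2\sigma^2}\right)$$

where $|\mathbf{V}|$ is the determinant of the $k \times k$ matrix $\mathbf{V}$. Note how this last equation reduces to the first form if $k = 1$, so that $\mathbf{x}$, $\mathbf{V}$ and $\boldsymbol{\mu}$ are scalars (with $\mathbf{V} = 1/\lambda$).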
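As a numerical sanity check, the univariate density can be written as a short Python function and compared with the product of the normal and inverse-gamma densities from the definition. This is a minimal sketch: the helper names `nig_pdf`, `normal_pdf` and `inv_gamma_pdf` are hypothetical, and the density is evaluated in log space to avoid overflow for large $\alpha$.

```python
import math


def nig_pdf(x, sigma2, mu, lam, alpha, beta):
    """Univariate normal-inverse-gamma density f(x, sigma^2 | mu, lambda, alpha, beta)."""
    if sigma2 <= 0:
        return 0.0
    # Evaluate the log-density first, then exponentiate.
    log_f = (
        0.5 * math.log(lam / (2 * math.pi * sigma2))
        + alpha * math.log(beta)
        - math.lgamma(alpha)
        - (alpha + 1) * math.log(sigma2)
        - (2 * beta + lam * (x - mu) ** 2) / (2 * sigma2)
    )
    return math.exp(log_f)


def normal_pdf(x, mean, var):
    """Normal density with the given mean and variance."""
    return math.exp(-((x - mean) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)


def inv_gamma_pdf(s, a, b):
    """Inverse-gamma density with shape a and scale b."""
    return math.exp(a * math.log(b) - math.lgamma(a) - (a + 1) * math.log(s) - b / s)


# Arbitrary example point and parameters.
x, sigma2, mu, lam, alpha, beta = 0.7, 1.3, 0.2, 2.0, 3.0, 1.5
joint = nig_pdf(x, sigma2, mu, lam, alpha, beta)
# Definition: x | sigma^2 ~ N(mu, sigma^2 / lambda) and sigma^2 ~ Inv-Gamma(alpha, beta).
product = normal_pdf(x, mu, sigma2 / lam) * inv_gamma_pdf(sigma2, alpha, beta)
print(joint, product)
```

The two printed values should agree up to floating-point rounding, since the joint density is exactly the conditional normal density times the inverse-gamma density.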

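Alternative parameterization

It is also possible to let $\gamma = 1/\lambda$, in which case the pdf becomes

$$f(x, \sigma^2 \mid \mu, \gamma, \alpha, \beta) = \frac{1}{\sqrt{2\pi\gamma\sigma^2}} \, \frac{\beta^\alpha}{\Gamma(\alpha)} \left(\frac{1}{\sigma^2}\right)^{\alpha + 1} \exp\left(-\frac{2\gamma\beta + (x - \mu)^2}{2\gamma\sigma^2}\right)$$

In the multivariate form, the corresponding change would be to regard the covariance matrix $\mathbf{V}$ instead of its inverse $\mathbf{V}^{-1}$ as a parameter.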
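The two parameterizations describe the same density. A minimal sketch that evaluates both forms at the same point (the function names `nig_pdf_lambda` and `nig_pdf_gamma` are hypothetical) makes the equivalence concrete:

```python
import math


def nig_pdf_lambda(x, sigma2, mu, lam, alpha, beta):
    """Density in the standard (lambda) parameterization."""
    return (
        math.sqrt(lam) / math.sqrt(2 * math.pi * sigma2)
        * beta**alpha / math.gamma(alpha)
        * (1 / sigma2) ** (alpha + 1)
        * math.exp(-(2 * beta + lam * (x - mu) ** 2) / (2 * sigma2))
    )


def nig_pdf_gamma(x, sigma2, mu, gamma_, alpha, beta):
    """Density in the alternative parameterization with gamma = 1 / lambda."""
    return (
        1 / math.sqrt(2 * math.pi * gamma_ * sigma2)
        * beta**alpha / math.gamma(alpha)
        * (1 / sigma2) ** (alpha + 1)
        * math.exp(-(2 * gamma_ * beta + (x - mu) ** 2) / (2 * gamma_ * sigma2))
    )


lam = 4.0
a = nig_pdf_lambda(0.4, 0.9, 0.0, lam, 2.5, 1.2)
b = nig_pdf_gamma(0.4, 0.9, 0.0, 1 / lam, 2.5, 1.2)
print(a, b)  # should agree up to floating-point rounding
```

Dividing numerator and denominator of the exponent by $\gamma$ recovers $\beta/\sigma^2 + \lambda(x - \mu)^2/(2\sigma^2)$, which is why the two functions coincide.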

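Cumulative distribution function

Properties

Marginal distributions

Given $(x, \sigma^2) \sim \text{N-}\Gamma^{-1}(\mu, \lambda, \alpha, \beta)$ as above, $\sigma^2$ by itself follows an inverse-gamma distribution:

$$\sigma^2 \sim \Gamma^{-1}(\alpha, \beta)$$

while $\sqrt{\frac{\alpha\lambda}{\beta}}\,(x - \mu)$ follows a t distribution with $2\alpha$ degrees of freedom.[1]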
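Both marginal statements can be checked numerically by integrating the joint density. This is a sketch under stated assumptions: it uses composite Simpson's rule for simplicity, and the integration windows and step counts are ad hoc choices adequate for the example parameters, not a general-purpose integrator. The t density is written in location-scale form with $\nu = 2\alpha$ and squared scale $\beta/(\alpha\lambda)$, which is what the standardization $\sqrt{\alpha\lambda/\beta}\,(x - \mu)$ implies.

```python
import math


def nig_pdf(x, sigma2, mu, lam, alpha, beta):
    """Univariate normal-inverse-gamma density."""
    log_f = (
        0.5 * math.log(lam / (2 * math.pi * sigma2))
        + alpha * math.log(beta)
        - math.lgamma(alpha)
        - (alpha + 1) * math.log(sigma2)
        - (2 * beta + lam * (x - mu) ** 2) / (2 * sigma2)
    )
    return math.exp(log_f)


def simpson(f, a, b, n=4000):
    """Composite Simpson's rule on [a, b] with an even number n of panels."""
    h = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(a + i * h)
    return total * h / 3


def student_t_pdf(x, nu, loc, scale2):
    """Location-scale Student's t density; scale2 is the squared scale."""
    log_c = (
        math.lgamma((nu + 1) / 2)
        - math.lgamma(nu / 2)
        - 0.5 * math.log(nu * math.pi * scale2)
    )
    return math.exp(log_c - (nu + 1) / 2 * math.log1p((x - loc) ** 2 / (nu * scale2)))


def inv_gamma_pdf(s, a, b):
    """Inverse-gamma density with shape a and scale b."""
    return math.exp(a * math.log(b) - math.lgamma(a) - (a + 1) * math.log(s) - b / s)


mu, lam, alpha, beta = 0.5, 2.0, 3.0, 1.5


def marginal_x(x):
    # Integrate sigma^2 out; substituting sigma^2 = exp(u) gives d sigma^2 = exp(u) du.
    return simpson(lambda u: nig_pdf(x, math.exp(u), mu, lam, alpha, beta) * math.exp(u), -15.0, 15.0)


def marginal_sigma2(s):
    # Integrate x out over +/- 12 conditional standard deviations around mu.
    half_width = 12 * math.sqrt(s / lam)
    return simpson(lambda x: nig_pdf(x, s, mu, lam, alpha, beta), mu - half_width, mu + half_width)


for x in (-1.0, 0.5, 2.0):
    print(x, marginal_x(x), student_t_pdf(x, 2 * alpha, mu, beta / (alpha * lam)))
for s in (0.2, 0.8, 3.0):
    print(s, marginal_sigma2(s), inv_gamma_pdf(s, alpha, beta))
```

Each printed pair should match to many digits; the substitution $\sigma^2 = e^u$ is used because it turns the long right tail in $\sigma^2$ into a short, smooth window in $u$.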

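For $\lambda = 1$, the probability density function is

$$f(x, \sigma^2 \mid \mu, \alpha, \beta) = \frac{1}{\sqrt{2\pi\sigma^2}} \, \frac{\beta^\alpha}{\Gamma(\alpha)} \left(\frac{1}{\sigma^2}\right)^{\alpha + 1} \exp\left(-\frac{2\beta + (x - \mu)^2}{2\sigma^2}\right)$$

The marginal distribution over $x$ is

$$f(x \mid \mu, \alpha, \beta) = \int_0^\infty f(x, \sigma^2 \mid \mu, \alpha, \beta) \, d\sigma^2 = \frac{1}{\sqrt{2\pi}} \, \frac{\beta^\alpha}{\Gamma(\alpha)} \int_0^\infty \left(\frac{1}{\sigma^2}\right)^{\alpha + 1/2 + 1} \exp\left(-\frac{2\beta + (x - \mu)^2}{2\sigma^2}\right) d\sigma^2$$

Except for a normalization factor, the expression under the integral coincides with the inverse-gamma density

$$\Gamma^{-1}(z; a, b) = \frac{b^a}{\Gamma(a)} \, \frac{e^{-b/z}}{z^{a+1}},$$

with $z = \sigma^2$, $a = \alpha + 1/2$, $b = \frac{2\beta + (x - \mu)^2}{2}$.

Since $\int_0^\infty \Gamma^{-1}(z; a, b) \, dz = 1$, it follows that $\int_0^\infty z^{-(a+1)} e^{-b/z} \, dz = \Gamma(a) \, b^{-a}$, and therefore

$$\int_0^\infty \left(\frac{1}{\sigma^2}\right)^{\alpha + 1/2 + 1} \exp\left(-\frac{2\beta + (x - \mu)^2}{2\sigma^2}\right) d\sigma^2 = \Gamma\!\left(\alpha + \tfrac{1}{2}\right) \left(\frac{2\beta + (x - \mu)^2}{2}\right)^{-(\alpha + 1/2)}$$

Substituting this expression and factoring out the dependence on $x$,

$$f(x \mid \mu, \alpha, \beta) \propto_x \left(1 + \frac{(x - \mu)^2}{2\beta}\right)^{-(\alpha + 1/2)}.$$

The shape of the generalized Student's t-distribution is

$$t(x \mid \nu, \hat{\mu}, \hat{\sigma}^2) \propto_x \left(1 + \frac{1}{\nu} \, \frac{(x - \hat{\mu})^2}{\hat{\sigma}^2}\right)^{-(\nu + 1)/2}.$$

The marginal distribution $f(x \mid \mu, \alpha, \beta)$ therefore follows a t-distribution with $2\alpha$ degrees of freedom:

$$f(x \mid \mu, \alpha, \beta) = t\!\left(x \mid \nu = 2\alpha, \hat{\mu} = \mu, \hat{\sigma}^2 = \beta/\alpha\right).$$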
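The key step in the derivation above is the integral identity $\int_0^\infty z^{-(a+1)} e^{-b/z}\,dz = \Gamma(a)\,b^{-a}$. A minimal numerical check, assuming composite Simpson's rule after the substitution $z = e^u$ (the function names and the window $[-20, 20]$ are illustrative choices, not part of the article), uses the same $a$ and $b$ as the derivation:

```python
import math


def integral_numeric(a, b, lo=-20.0, hi=20.0, n=8000):
    """Numerically evaluate the integral of z^-(a+1) * exp(-b/z) over z in (0, inf).

    Substituting z = exp(u) turns the integrand into exp(-a*u - b*exp(-u)) du,
    which is then integrated over [lo, hi] with composite Simpson's rule.
    """
    h = (hi - lo) / n

    def g(u):
        return math.exp(-a * u - b * math.exp(-u))

    total = g(lo) + g(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * g(lo + i * h)
    return total * h / 3


def integral_closed_form(a, b):
    """Gamma(a) * b^(-a), from the normalization of the inverse-gamma density."""
    return math.gamma(a) * b ** (-a)


# a and b as in the derivation: a = alpha + 1/2, b = (2*beta + (x - mu)^2) / 2.
alpha, beta, mu, x = 3.0, 1.5, 0.5, 2.0
a = alpha + 0.5
b = (2 * beta + (x - mu) ** 2) / 2
print(integral_numeric(a, b), integral_closed_form(a, b))
```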

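In the multivariate case, the marginal distribution of $\mathbf{x}$ is a multivariate t distribution:

$$\mathbf{x} \sim t_{2\alpha}\!\left(\boldsymbol{\mu}, \frac{\beta}{\alpha} \mathbf{V}\right)$$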

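Summation

Scaling

Suppose

$$(x, \sigma^2) \sim \text{N-}\Gamma^{-1}(\mu, \lambda, \alpha, \beta).$$

Then for $c > 0$,

$$(cx, c\sigma^2) \sim \text{N-}\Gamma^{-1}(c\mu, \lambda/c, \alpha, c\beta).$$

Proof: To prove this, let $(x, \sigma^2) \sim \text{N-}\Gamma^{-1}(\mu, \lambda, \alpha, \beta)$ and fix $c > 0$. Defining $Y = (Y_1, Y_2) = (cx, c\sigma^2)$, observe that the PDF of the random variable $Y$ evaluated at $(y_1, y_2)$ is given by $1/c^2$ (the Jacobian of the inverse map) times the PDF of a $\text{N-}\Gamma^{-1}(\mu, \lambda, \alpha, \beta)$ random variable evaluated at $(y_1/c, y_2/c)$. Hence the PDF of $Y$ evaluated at $(y_1, y_2)$ is given by:

$$f_Y(y_1, y_2) = \frac{1}{c^2} \, \frac{\sqrt{\lambda}}{\sqrt{2\pi y_2/c}} \, \frac{\beta^\alpha}{\Gamma(\alpha)} \left(\frac{1}{y_2/c}\right)^{\alpha + 1} \exp\left(-\frac{2\beta + \lambda (y_1/c - \mu)^2}{2 y_2/c}\right) = \frac{\sqrt{\lambda/c}}{\sqrt{2\pi y_2}} \, \frac{(c\beta)^\alpha}{\Gamma(\alpha)} \left(\frac{1}{y_2}\right)^{\alpha + 1} \exp\left(-\frac{2c\beta + (\lambda/c)(y_1 - c\mu)^2}{2 y_2}\right)$$

The right-hand expression is the PDF of a $\text{N-}\Gamma^{-1}(c\mu, \lambda/c, \alpha, c\beta)$ random variable evaluated at $(y_1, y_2)$, which completes the proof.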
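The scaling result can also be checked pointwise: the change-of-variables density of $(cx, c\sigma^2)$ should equal the $\text{N-}\Gamma^{-1}(c\mu, \lambda/c, \alpha, c\beta)$ density. A minimal sketch, with hypothetical helper names and arbitrary example values:

```python
import math


def nig_pdf(x, sigma2, mu, lam, alpha, beta):
    """Univariate normal-inverse-gamma density."""
    log_f = (
        0.5 * math.log(lam / (2 * math.pi * sigma2))
        + alpha * math.log(beta)
        - math.lgamma(alpha)
        - (alpha + 1) * math.log(sigma2)
        - (2 * beta + lam * (x - mu) ** 2) / (2 * sigma2)
    )
    return math.exp(log_f)


def scaled_pdf(y1, y2, c, mu, lam, alpha, beta):
    """Density of Y = (c*x, c*sigma^2) by change of variables (Jacobian factor 1/c^2)."""
    return nig_pdf(y1 / c, y2 / c, mu, lam, alpha, beta) / c**2


c, mu, lam, alpha, beta = 2.5, 0.3, 1.7, 2.2, 0.9
points = [(0.1, 0.5), (1.4, 2.0), (-2.0, 6.0)]
for y1, y2 in points:
    lhs = scaled_pdf(y1, y2, c, mu, lam, alpha, beta)
    # Claimed distribution of Y: N-Gamma^-1(c*mu, lambda/c, alpha, c*beta).
    rhs = nig_pdf(y1, y2, c * mu, lam / c, alpha, c * beta)
    print(y1, y2, lhs, rhs)
```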

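Exponential family

Normal-inverse-gamma distributions form an exponential family with natural parameters $\theta_1 = -\frac{\lambda}{2}$, $\theta_2 = \lambda\mu$, $\theta_3 = \alpha$, and $\theta_4 = -\beta - \frac{\lambda\mu^2}{2}$ and sufficient statistics $T_1 = \frac{x^2}{\sigma^2}$, $T_2 = \frac{x}{\sigma^2}$, $T_3 = \log\left(\frac{1}{\sigma^2}\right)$, and $T_4 = \frac{1}{\sigma^2}$.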
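Expanding the log-density confirms this form. The article does not state the base measure or log-normalizer, so the sketch below assumes the leftover factor $h(x, \sigma^2) = (2\pi)^{-1/2}(\sigma^2)^{-3/2}$ is absorbed into the base measure and $A = \log\Gamma(\alpha) - \alpha\log\beta - \tfrac{1}{2}\log\lambda$; both are derived by expanding $(x - \mu)^2$, and all function names are hypothetical. It checks $\log f = \log h + \sum_i \theta_i T_i - A$ at a few points:

```python
import math


def nig_log_pdf(x, sigma2, mu, lam, alpha, beta):
    """Log of the univariate normal-inverse-gamma density."""
    return (
        0.5 * math.log(lam / (2 * math.pi * sigma2))
        + alpha * math.log(beta)
        - math.lgamma(alpha)
        - (alpha + 1) * math.log(sigma2)
        - (2 * beta + lam * (x - mu) ** 2) / (2 * sigma2)
    )


def natural_params(mu, lam, alpha, beta):
    return (-lam / 2, lam * mu, alpha, -beta - lam * mu**2 / 2)


def sufficient_stats(x, sigma2):
    return (x**2 / sigma2, x / sigma2, math.log(1 / sigma2), 1 / sigma2)


def log_partition(mu, lam, alpha, beta):
    # Assumed log-normalizer A, written in the original parameters.
    return math.lgamma(alpha) - alpha * math.log(beta) - 0.5 * math.log(lam)


def log_base_measure(sigma2):
    # Assumed base measure h(x, sigma^2) = (2 pi)^(-1/2) * (sigma^2)^(-3/2).
    return -0.5 * math.log(2 * math.pi) - 1.5 * math.log(sigma2)


def exp_family_log_pdf(x, sigma2, mu, lam, alpha, beta):
    theta = natural_params(mu, lam, alpha, beta)
    t = sufficient_stats(x, sigma2)
    dot = sum(th * ti for th, ti in zip(theta, t))
    return log_base_measure(sigma2) + dot - log_partition(mu, lam, alpha, beta)


params = (0.4, 1.8, 2.5, 1.1)  # mu, lambda, alpha, beta
points = [(0.0, 0.5), (1.2, 1.5), (-0.7, 3.0)]
for x, s in points:
    print(nig_log_pdf(x, s, *params), exp_family_log_pdf(x, s, *params))
```

The $(\sigma^2)^{-3/2}$ in the base measure is why $\theta_3 = \alpha$ rather than $\alpha + 3/2$.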

Information entropy

Kullback–Leibler divergence

The Kullback–Leibler divergence measures the difference between two distributions.

Maximum likelihood estimation

Posterior distribution of the parameters

See the articles on normal-gamma distribution and conjugate prior.
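For reference, the standard conjugate update can be sketched directly. The sketch assumes observations $y_1, \dots, y_n \sim \mathrm{N}(x, \sigma^2)$ with prior $(x, \sigma^2) \sim \text{N-}\Gamma^{-1}(\mu_0, \lambda_0, \alpha_0, \beta_0)$; the update formulas are the usual textbook ones rather than something stated in this article, and the names `nig_posterior` and `data` are hypothetical. The check uses the fact that $\log(\text{prior} \times \text{likelihood}) - \log(\text{posterior})$ must be the same constant at every $(x, \sigma^2)$:

```python
import math


def nig_log_pdf(x, sigma2, mu, lam, alpha, beta):
    """Log of the univariate normal-inverse-gamma density."""
    return (
        0.5 * math.log(lam / (2 * math.pi * sigma2))
        + alpha * math.log(beta)
        - math.lgamma(alpha)
        - (alpha + 1) * math.log(sigma2)
        - (2 * beta + lam * (x - mu) ** 2) / (2 * sigma2)
    )


def normal_log_pdf(y, mean, var):
    return -0.5 * math.log(2 * math.pi * var) - (y - mean) ** 2 / (2 * var)


def nig_posterior(data, mu0, lam0, alpha0, beta0):
    """Conjugate update for y_i ~ N(x, sigma^2) with an N-Gamma^-1 prior on (x, sigma^2)."""
    n = len(data)
    ybar = sum(data) / n
    ss = sum((y - ybar) ** 2 for y in data)  # within-sample sum of squares
    lam_n = lam0 + n
    mu_n = (lam0 * mu0 + n * ybar) / lam_n
    alpha_n = alpha0 + n / 2
    beta_n = beta0 + 0.5 * ss + lam0 * n * (ybar - mu0) ** 2 / (2 * lam_n)
    return mu_n, lam_n, alpha_n, beta_n


data = [1.2, 0.7, 2.1, 1.5, 0.9]  # illustrative observations
prior = (0.0, 1.0, 2.0, 1.0)       # mu0, lambda0, alpha0, beta0
post = nig_posterior(data, *prior)


def log_prior_times_likelihood(x, sigma2):
    return nig_log_pdf(x, sigma2, *prior) + sum(normal_log_pdf(y, x, sigma2) for y in data)


# If the update is correct, this difference does not depend on (x, sigma^2).
points = [(0.5, 0.3), (1.3, 1.0), (2.0, 2.5)]
diffs = [log_prior_times_likelihood(x, s) - nig_log_pdf(x, s, *post) for x, s in points]
print(post, diffs)
```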

Interpretation of the parameters

See the articles on normal-gamma distribution and conjugate prior.

Generating normal-inverse-gamma random variates

Generation of random variates is straightforward:

- Sample $\sigma^2$ from an inverse-gamma distribution with parameters $\alpha$ and $\beta$.

- Sample $x$ from a normal distribution with mean $\mu$ and variance $\sigma^2/\lambda$.
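The two sampling steps can be sketched with Python's standard `random` module. Two details are easy to get wrong and are assumptions worth stating: `random.gammavariate(alpha, beta)` takes a *scale* (not a rate), so an $\text{Inv-Gamma}(\alpha, \beta)$ draw is taken as the reciprocal of a gamma draw with scale $1/\beta$; and `random.gauss` takes a standard deviation, not a variance. The helper name `sample_nig` is hypothetical.

```python
import math
import random


def sample_nig(mu, lam, alpha, beta, rng=random):
    """Draw one (x, sigma^2) pair from N-Gamma^-1(mu, lambda, alpha, beta)."""
    # Step 1: sigma^2 ~ Inv-Gamma(alpha, beta). If G ~ Gamma(shape=alpha, rate=beta),
    # then 1/G ~ Inv-Gamma(alpha, beta); gammavariate takes a scale, so pass 1/beta.
    sigma2 = 1.0 / rng.gammavariate(alpha, 1.0 / beta)
    # Step 2: x | sigma^2 ~ N(mu, sigma^2 / lambda); gauss takes a standard deviation.
    x = rng.gauss(mu, math.sqrt(sigma2 / lam))
    return x, sigma2


rng = random.Random(12345)  # fixed seed for reproducibility
mu, lam, alpha, beta = 1.0, 2.0, 5.0, 4.0
n = 100_000
draws = [sample_nig(mu, lam, alpha, beta, rng) for _ in range(n)]

mean_x = sum(x for x, _ in draws) / n
mean_s2 = sum(s for _, s in draws) / n
var_x = sum((x - mean_x) ** 2 for x, _ in draws) / (n - 1)

# Compare with E[x] = mu, E[sigma^2] = beta / (alpha - 1), Var[x] = beta / ((alpha - 1) * lambda).
print(mean_x, mu)
print(mean_s2, beta / (alpha - 1))
print(var_x, beta / ((alpha - 1) * lam))
```

The Monte Carlo estimates should land close to the closed-form moments; the parameters were chosen with $\alpha > 2$ so that all three moments exist.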

Related distributions

- The normal-gamma distribution is the same distribution parameterized by precision rather than variance.

- A generalization of this distribution which allows for a multivariate mean and a completely unknown positive-definite covariance matrix $\sigma^2 \mathbf{V}$ (whereas in the multivariate normal-inverse-gamma distribution the covariance matrix is regarded as known up to the scale factor $\sigma^2$) is the normal-inverse-Wishart distribution.
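The precision relationship can be checked with a change of variables $\tau = 1/\sigma^2$, whose Jacobian is $1/\tau^2$. The sketch assumes the usual normal-gamma density $\frac{\beta^\alpha\sqrt{\lambda}}{\Gamma(\alpha)\sqrt{2\pi}}\,\tau^{\alpha - 1/2} e^{-\beta\tau} \exp\!\left(-\frac{\lambda\tau(x - \mu)^2}{2}\right)$ with the same $(\mu, \lambda, \alpha, \beta)$; the function names are hypothetical.

```python
import math


def nig_pdf(x, sigma2, mu, lam, alpha, beta):
    """Univariate normal-inverse-gamma density."""
    log_f = (
        0.5 * math.log(lam / (2 * math.pi * sigma2))
        + alpha * math.log(beta)
        - math.lgamma(alpha)
        - (alpha + 1) * math.log(sigma2)
        - (2 * beta + lam * (x - mu) ** 2) / (2 * sigma2)
    )
    return math.exp(log_f)


def normal_gamma_pdf(x, tau, mu, lam, alpha, beta):
    """Normal-gamma density in the precision tau (assumed standard parameterization)."""
    return (
        beta**alpha * math.sqrt(lam) / (math.gamma(alpha) * math.sqrt(2 * math.pi))
        * tau ** (alpha - 0.5)
        * math.exp(-beta * tau)
        * math.exp(-lam * tau * (x - mu) ** 2 / 2)
    )


def nig_as_precision_pdf(x, tau, mu, lam, alpha, beta):
    # Change of variables sigma^2 = 1 / tau, with |d sigma^2 / d tau| = 1 / tau^2.
    return nig_pdf(x, 1 / tau, mu, lam, alpha, beta) / tau**2


params = (0.2, 1.5, 2.0, 0.8)  # mu, lambda, alpha, beta shared by both families
points = [(0.0, 0.5), (1.0, 2.0), (-1.5, 4.0)]
for x, tau in points:
    print(normal_gamma_pdf(x, tau, *params), nig_as_precision_pdf(x, tau, *params))
```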

See also

References

- [1] Ramírez-Hassan, Andrés. "4.2 Conjugate prior to exponential family". *Introduction to Bayesian Econometrics*.
